Spring 2024: Miles B. Vance

Frames of Death: The Rhetorical Goals of Official Social Media Posts in the Russo-Ukrainian War

Miles B. Vance

Cinema and Television Arts, Elon University

Submitted in partial fulfillment of the requirements in an undergraduate senior capstone course in communications

Abstract

The prevalence of social media in daily life has had a substantive impact on propaganda and population manipulation. For Western audiences, this phenomenon manifested most visibly in the 2016 United States presidential election, in which Russian misinformation campaigns were used to promote the efforts of eventual president Donald J. Trump. In recent conflicts around the world, social media propaganda has also been used alongside traditional propaganda as part of psychological warfare campaigns. The most recent example can be seen in the ongoing phase of the Russo-Ukrainian War, which began when Russia launched its full-scale invasion of Ukraine in February 2022. This research examined social media content posted by Russian, Ukrainian, and Wagner-affiliated channels and analyzed that content for rhetorical goals and devices. The study analyzed two posts from each side for each of three events: the fall of Bakhmut, the Kerch Bridge explosion, and the one-year anniversary of the invasion. This yields a total of 18 individual posts, with an additional two images analyzed concerning the advent of drone video posts. The results show that the most common themes of social media content were legitimization, deflection, humor, and violence. All three sides appear to use similar tactics, although official Russian channels often decline to post directly violent content. These channels appear motivated to engage the viewer and either uplift or dismay them.

Keywords: Hybrid warfare, Russo-Ukrainian War, propaganda, social media

Email: mvance5@elon.edu

I. Introduction

This research analyzes the online social media content posted during the ongoing Russian invasion of Ukraine, which is part of the decade-long Russo-Ukrainian War. The research revolves around three distinct entities posting videos and images of the conflict: the Armed Forces of Ukraine (ZSU), the Armed Forces of the Russian Federation (VSRF), and the Russian private military contractor known as the Wagner Group. Included in the selection of Ukrainian sources are units such as the Third Assault Brigade, a Ukrainian military division composed of soldiers belonging to the Azov Battalion, a far-right third-party militia in Ukraine that has been incorporated into the Ukrainian Armed Forces. The purpose of this research is to qualitatively examine the online content produced by these entities and analyze such material for elements of narrative, tone, framing, music, and internet memes, all of which construct a form of postmodern psychological warfare known as hybrid warfare or information warfare. In previous conflicts, propaganda leaflets were airdropped to enemy combatants and civilians. The internet provides a new medium for spreading propaganda and false information, which may greatly assist a country’s war effort by influencing the civilians and soldiers of both its homeland and its opponents.

II. Literature Review

In the years since the conclusion of the Cold War, information technologies such as the internet, computers, and cell phones have rapidly advanced in ability, speed, and prevalence in daily life. It is now almost inevitable that any given person in a developed nation will be in possession of a device capable of connection to the internet. This era of hyper-connectivity creates new possibilities for the use of information in warfare. The concepts of hybrid warfare, information warfare, social media, and their roles in the ongoing Russian invasion of Ukraine will be discussed in this literature review.

According to Danyk and Briggs (2023), “Tools of information perception and manipulation can be used to achieve various political, economic, military, and other goals, which in some interpretations is a form of preventative defense” (p. 35). The integration of such “tools of information perception and manipulation” into warfare is part of a phenomenon referred to as “hybrid warfare.” Hybrid warfare has existed as a phenomenon since the 1990s and will remain relevant in conflicts for the foreseeable future (Danyk & Briggs, 2023). Hybrid warfare is utilized by countries the world over, and it is useful because “The ambiguous nature of (hybrid warfare) and its use of ambiguous modes such as insurgency and terrorism allow a belligerent to minimalize targeting opportunities by denying the opponent the ability to utilize its advantage in firepower” (Brown, 2018, p. 62). Put simply, hybrid warfare seeks to circumvent material or numerical disadvantage through alternative means. These methods can include information warfare, cyber warfare, guerrilla-like infrastructure attacks, or any number of other alternative attacks on an adversary. Russia has been noted for its usage of the concept in its military interventions in Georgia and Ukraine (Brown, 2018), and this is part of Russia’s larger attempt at utilizing hybrid warfare “in its pursuit of dominance in the Eastern neighborhood” (Ratsiborynska, 2016, p. 18). This research focuses on the use of information and misinformation within hybrid warfare, particularly in the form of posts from Russian and Ukrainian sources on social media.

In the 21st century, social media is an increasingly important tool in agenda setting, which is the act of determining which issues will be important to the public (Feezell, 2018). Agenda setting on social media is particularly potent against those who are typically “uninformed” regarding political issues (Feezell, 2018). Prier (2017) identifies social media as a new and powerful tool in the field of information warfare, noting that the platforms “could be used as a weapon against the minds of the population… he who controls the trend will control the narrative – and, ultimately, the narrative controls the will of the people” (p. 81). Russia has long been a pioneer of this method, and perhaps its most visible efforts from a Western perspective can be seen in the 2016 U.S. presidential election, in which Russian information weapons systems directly targeted American users of social media websites to achieve the goal of spreading disruption, false information, and distrust (Hanlon, 2018).

Biały (2017), borrowing from Ben Nimmo, has identified four main ways in which Russia has used social media to spread misinformation and sow distrust. These are: dismissing online commentators by attacking their credibility or factual statements, distorting facts with misleading depictions and contexts, distracting the audience by arguing alternative lines of reasoning, and dismaying the audience with frightening content. This final tactic of dismay has been used heavily in the ongoing conflict surrounding the invasion, with thousands of horribly gory videos of drone bombings, civilian deaths, and other realities of war proliferating across social media feeds. Ross and Rutland (2022) have identified “Words, tweets, TikToks, Instagram posts, drone recordings, and any other microtarget-enabling media deemed ‘view-worthy’” (p. 222) as the primary “weapons” of information warfare. Ross and Rutland further urge the U.S. Army to develop “appropriate doctrinal changes related to information operations, public affairs, and cyber space operations” (p. 222).

Russia has been extraordinarily proactive in its use of information warfare in the ongoing Russo-Ukrainian War, only increasing its efforts after the 2022 invasion of Ukraine. Mullaney (2022) has performed valuable work in quantifying and describing Russia’s perceived goals in its social media war campaigns, and notes that the current goal is to pressure the local population, distract civilians from facts, and actively deceive them with false information and skewed perspectives. Mullaney further explains how Russia targets users, remarking that “the Russian domestic audience remained the most important target throughout the war… (Russia) targeted vulnerabilities within its society and sought to legitimize the war” (Mullaney, 2022, p. 199). This shows that information warfare, especially when viewed through the lens of social media, can target multiple audiences. Propaganda is no longer a purely domestic effort, as the same posts can be viewed by both Russian patriots and Ukrainians who may doubt their government’s legitimacy. Indeed, a popular topic addressed by Putin’s regime seems to be the supposedly Nazi-infested parliament of Ukraine. This rhetoric is likely used because it targets both Russians and Ukrainians as an audience and appeals to a commonly recognized evil entity.

One interesting aspect of the Russian use of hybrid warfare in the ongoing conflict post-invasion is that Russia has not used cyberattacks to target the internet. Russia has occasionally targeted civilian power infrastructure and governmental infrastructure, but in a much more restrained and noninvasive way than theorists of cyber warfare had previously predicted (Lin, 2022). This lack of direct attack could be because Russia lacks the capabilities to engage in cyber warfare on the scale previously estimated, but it is far more likely that Russia wants Ukrainian civilians and soldiers to continue to have access to power and internet so that those individuals can be exposed to the Russian information warfare systems. If Kyiv were to “go black,” the Russian efforts at dominating social media would be worthless. It is possible that Russia will use cyberattacks to target substations and internet/cell towers in a retreat scenario, as was seen with the Chernobyl area. However, this remains to be seen, and for the time being, Russia appears perfectly content to keep Ukrainian internet active.

The current field of research is extensive in its examination of Russia’s use of hybrid warfare, including the aspect of information warfare on social media. However, the existing work has not fully examined Russia’s invasion of Ukraine, the latest iteration of the Russo-Ukrainian War. Furthermore, current research neglects to consider Ukraine’s efforts at social media information warfare and related hybrid warfare efforts. This may be because Ukraine has largely mirrored Russia in its efforts, but this research seeks to investigate Ukrainian voices alongside Russian ones. Finally, the current field of research has given little consideration to the rhetorical devices employed by those who wage information warfare on social media platforms. This research seeks to fill the gap in existing study by providing a current and relevant investigation of the unique rhetorical devices employed in social media content by Russian, Ukrainian, and third-party forces.

Research Questions

The following questions will be the focus of this research project:

RQ1: What specific methods and rhetorical tools are used by Russian, Ukrainian, and PMC forces to frame the war in video social media content?

RQ2: What similarities and differences exist between Russian, Ukrainian, and third-party forces’ methods and video content?

RQ3: Why do these forces make these rhetorical decisions? What benefits might their unique utilizations of framing offer in advancing their narrative?

This research is timely because it seeks to analyze an ongoing conflict for the rhetorical goals present in social media videos. Previous research has examined Russia’s engagement with hybrid and information warfare, but it has not fully examined the latest iteration of the Russo-Ukrainian War beginning with the 2022 invasion of Ukraine. Furthermore, existing research does not tend to focus on the specific visual and rhetorical elements utilized in propaganda posts. Finally, the current scope of research studying information in the Russo-Ukrainian War focuses greatly on Russia but fails to adequately explore Ukrainian and third-party attempts at propaganda and information warfare. For these reasons, this research addresses important and neglected topics in the fields of information warfare and propaganda.

III. Methods

This research uses qualitative content analysis to identify the specific visual, aural, and narrative elements comprising Russian and Ukrainian attempts at propaganda. Rosenberry and Vicker (2021) define qualitative content analysis as a form of research where “the researcher ‘interacts’ with the documentary materials to analyze the documents and better understand their meanings in context” (p. 228). Qualitative content analysis is useful for this research project because it allows the direct analysis of Russian and Ukrainian social media posts in the context of the larger conflict, which is part of a war stretching back almost a decade. Qualitative content analysis also allows the researcher to examine these posts within the context of the surrounding battles and “flow” of the conflict in the days and weeks leading up to each post, which influence the ways in which these entities choose to portray the conflict. Rosenberry and Vicker (2021) also state that the specific content selected for investigation must be “selected for conceptually or theoretically relevant reasons” (p. 228).

This project has selected videos posted in the aftermath of three events: one Ukrainian victory, one Russian victory, and the one-year anniversary of the invasion in February 2023. These have been selected to ensure a wide range of content for understanding the ways in which each side frames (or ignores) victory and defeat. Deliberate selection within specific events is used instead of simply selecting random posts to ensure that rhetorical elements are present in the videos. By remaining selective in determining the sample, this research attempts to avoid any indeterminate elements, such as leaked videos or heavily reposted and re-edited content. In this project, the specific qualities that are examined include the video, audio, editing, tone, and overarching narrative of each post. All these elements combine to create the unique profile of each post, which in turn aims to form or modify the audience’s perception of the war.

The specific social media websites that are used in this research include, but are not limited to, Twitter, Reddit, Telegram, 4chan, and WhatsApp. Telegram and 4chan have been selected because they are the most accessible “Western” based websites that still host pro-Russia propaganda videos. Telegram especially appears to host many Russia- and Wagner-affiliated channels. Twitter, Reddit, and WhatsApp are useful sources because they serve as content aggregators, with several pages, such as Reddit’s r/CombatFootage, dedicated to reposting war footage and propaganda for the purpose of increasing public knowledge or spreading a narrative. However, many videos and images found on Reddit, Twitter, and WhatsApp seem to originate from Telegram, so videos selected from these sources are provided in their original Telegram context. Many mainstream social media platforms, such as Facebook or TikTok, have strict policies forbidding violent content, making analysis on their platforms difficult. To ensure a degree of randomness in sampling, two posts from each perspective are randomly selected from a larger pool of deliberately selected content in each of the aforementioned contexts (a Russian victory, a Ukrainian victory, and the invasion anniversary).
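The two-stage sampling described above (a deliberately curated pool, followed by a random draw of two posts per side per event) can be illustrated with a short sketch. This is not the procedure actually used in the study, which does not describe its tooling; the function name `draw_sample`, the event and side labels, and the placeholder post identifiers are all hypothetical.

```python
import random

# Hypothetical labels for the three events and three perspectives in the study.
EVENTS = ["Russian victory", "Ukrainian victory", "Invasion anniversary"]
SIDES = ["Ukrainian", "Russian", "Wagner"]
POSTS_PER_CELL = 2  # two posts per side per event, as stated in the Methods


def draw_sample(pool, seed=None):
    """Randomly draw POSTS_PER_CELL posts for every (event, side) pair.

    `pool` maps (event, side) to a list of deliberately pre-selected
    candidate post identifiers. A seed makes the draw reproducible.
    """
    rng = random.Random(seed)
    sample = {}
    for event in EVENTS:
        for side in SIDES:
            candidates = pool[(event, side)]
            if len(candidates) < POSTS_PER_CELL:
                raise ValueError(f"Too few candidates for {event} / {side}")
            # Sampling without replacement, so no post is chosen twice.
            sample[(event, side)] = rng.sample(candidates, POSTS_PER_CELL)
    return sample


# Placeholder pool: five made-up candidate identifiers per (event, side) pair.
pool = {
    (event, side): [f"{side}-{event}-candidate-{i}" for i in range(5)]
    for event in EVENTS
    for side in SIDES
}

sample = draw_sample(pool, seed=0)
total = sum(len(posts) for posts in sample.values())
print(total)  # 3 events x 3 sides x 2 posts = 18
```

Fixing the seed is one way to let another researcher reproduce the same draw from the same curated pool; the curation step itself remains a qualitative judgment that the sketch does not model.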

IV. Findings

This study found that there are consistent and identifiable trends in posting online content in the Russo-Ukrainian War. Table 1 below briefly outlines the typical social media responses identified after a Russian victory, Ukrainian victory, and the one-year anniversary of the invasion.

Table 1

| Event | Ukrainian Sources | Russian Sources | Wagner Sources |
| --- | --- | --- | --- |
| Russian Victory (Battle of Bakhmut, around May 20, 2023) | Ignored reports of Russian victory, sought to legitimize soldiers and capability. | Celebrated victory in Bakhmut, praised Wagner and Yevgeny Prigozhin. | Celebrated victory and praised Prigozhin, utilized memes and humor. |
| Ukrainian Victory (Kerch Bridge explosion, October 8, 2022) | Celebrated explosion, posted memes and boasted heavily. | Deflected to other events in the war, seldom mentioned bridge. | Posted recruitment advertisements and made loose references to the explosion. |
| One-Year Anniversary of Invasion (February 24, 2023) | Posted images of dead Russian soldiers, reposted Zelenskyy speech, and posted drone bombing footage. | Made solemn commemoration of the war, defended its legitimacy. | Posted dead Ukrainian soldiers and combat footage. |

Rhetorical Goals and Devices

In response to the first research question regarding the specific methods and rhetorical devices utilized on social media by Russian, Ukrainian, and Wagner forces, this study found that the most common themes between all sides were legitimization, deflection, humor, and violent content.

Legitimization

Figure 1. Post from Ukrainian Telegram channel “3-тя окрема штурмова бригада.” Text translates as: “Work at the headquarters on the front line near Bakhmut. Planning offensive actions of the 1st Assault Battalion.”

Images depict Ukrainian soldiers in a bunker surrounded by maps, papers, and computer screens.

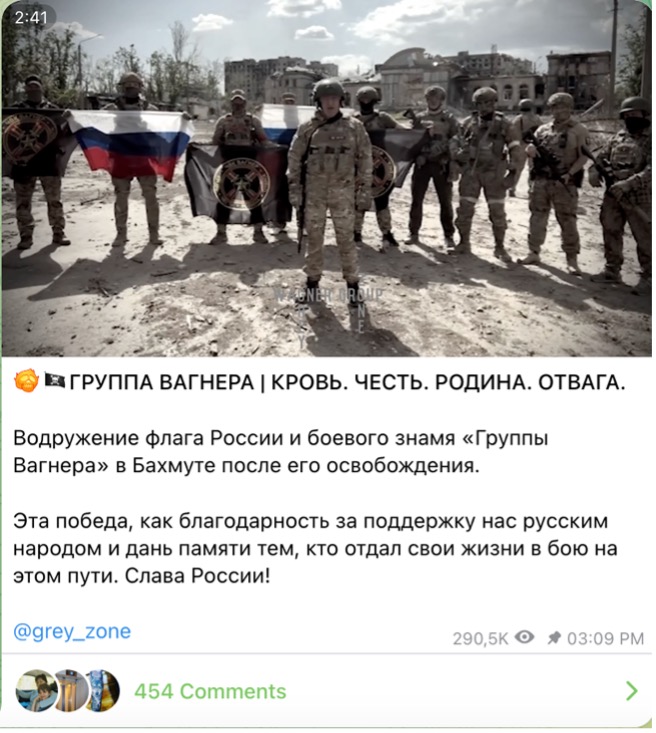

Figure 2. Post from Wagner Telegram channel “The Grey Zone.” Text translates as: “WAGNER GROUP | BLOOD. HONOR. HOMELAND. COURAGE. Raising the Russian flag and the battle banner of the Wagner Group in Bakhmut after its liberation. This victory is like gratitude for the support of the Russian people and a tribute to the memory of those who gave their lives in battle along this path. Glory to Russia!”

Video depicts Yevgeny Prigozhin announcing the capture of Bakhmut.

In this usage, legitimization refers to a broad category of posts that are designed to bolster support for a particular force by flexing its military might, emphasizing a nation’s values, or utilizing examples of civilian support. One specific example of this comes from Ukrainian sources following the fall of Bakhmut. Bakhmut is an important strategic city located north of Donetsk in the Donetsk Oblast of occupied Ukraine. On May 20, 2023, Russian and Wagner forces captured the city after more than 200 days of street-to-street and building-to-building combat (Kullab & Litvinova, 2023). After the city fell, the Third Separate Assault Brigade of Ukraine posted a series of high-quality photos displaying soldiers hunched over maps and documents along with the caption “Work at the headquarters on the front line near Bakhmut. Planning offensive actions of the 1st Assault Battalion” (Figure 1). This post seeks to legitimize the war and the battle by showing frontline staff hard at work.

Russian forces also sought to use tactics of legitimization in the context of defeat. On October 8, 2022, Ukrainian forces utilized a remote weapons delivery system to partially destroy the Kerch Bridge, a Russian structure connecting Crimea to mainland Russia. This bridge is an important symbol for Russian nationalists because it represents a tangible connection between Russia and Crimea, which was annexed by Russia in 2014. The destruction of the Kerch Bridge was hailed as both a symbolic victory and a significant infrastructural blow by Ukrainian forces. In the aftermath of the explosion, the pro-Russian Telegram channel Razved_Dozor posted a blurry video allegedly showing a Ukrainian civilian tearing down a Ukrainian flag in the city of Marhanets with the caption “In the city of Marganets (Dnepropetrovsk region), a citizen of Ukraine decided that the yellow-blue flag had an adverse effect on the residents of the city.” Instead of directly addressing the Kerch Bridge explosion, the Russian source immediately sought to legitimize Russian goals and undermine Ukrainian morale.

In the aftermath of the Battle of Bakhmut, Russian and Wagner sources relied heavily on legitimization to justify the long and casualty-heavy campaign. While the long-fought siege resulted in a Russian victory, it came at a significant cost in terms of funds, equipment, and manpower. One video from May 20, 2023, which circulated through nearly every pro-Russian and pro-Wagner source, shows Wagner leader Yevgeny Prigozhin announcing the capture of the city (Figure 2). In the video, Prigozhin salutes his fallen comrades and touts Russian propaganda while emphasizing the supremacy of Wagner’s capabilities. The Russian media mill generally lauded Wagner after the victory at Bakhmut, with the pro-Russian WarGonzo posting a video of Wagner soldiers posing with the Wagner flag flying over Bakhmut on May 20. This fervent embrace of Wagner after Bakhmut by Russian media sources may have ultimately been the final motivation for Prigozhin to launch his ill-fated “rebellion” against the Russian government just a month later.

The use of informational and accurate posts as a form of legitimization typically occurred only when the facts of a scenario served to benefit the force posting the content. One way that this phenomenon is manifested is in the form of posts from official government channels. After the Kerch Bridge explosion, the Ukrainian Deputy of the VRU Committee on National Security, Defense, and Intelligence, Yuriy Mysyagin, posted “another beautiful video” of the heavily damaged Kerch Bridge on his personal Telegram account. After the one-year anniversary of the invasion, a Telegram channel affiliated with the Ukrainian ZSU posted a video of Ukrainian president Volodymyr Zelenskyy speaking to a G7 conference. These posts use Ukrainian public officials to legitimize the war effort, especially in the context of a perceived victory, such as at the Kerch Bridge.

A similarly official appeal to legitimization can be found in a Wagner recruitment post uploaded immediately following the Kerch Bridge explosion. Many Russian nationalists expressed displeasure that Russian security would allow such an attack, and Wagner sought to distance itself from the Russian army by emphasizing the force’s good pay and “the best special effects at concerts” for anyone who would join, even those who “have a criminal record or are on the blacklist.” This post is intriguing because it uses a Russian defeat to legitimize Wagner as a superior fighting force, rather than an allied force.

The final examples of legitimization on social media come in the form of long, text-based appeals to nationalist sentiment. This was seen prevalently in Russian channels following the one-year anniversary of the “special operation,” as the war raged on long past its intended end date. The Russian Razved_Dozor Telegram channel posted a letter emphasizing that “everything that happened in a year had to happen,” and that “We are our own people, Russia is our own civilization.” This post relies on emotional appeal and patriotic sentiment to spur legitimization, in a format that would be familiar to propagandists of old.

Deflection

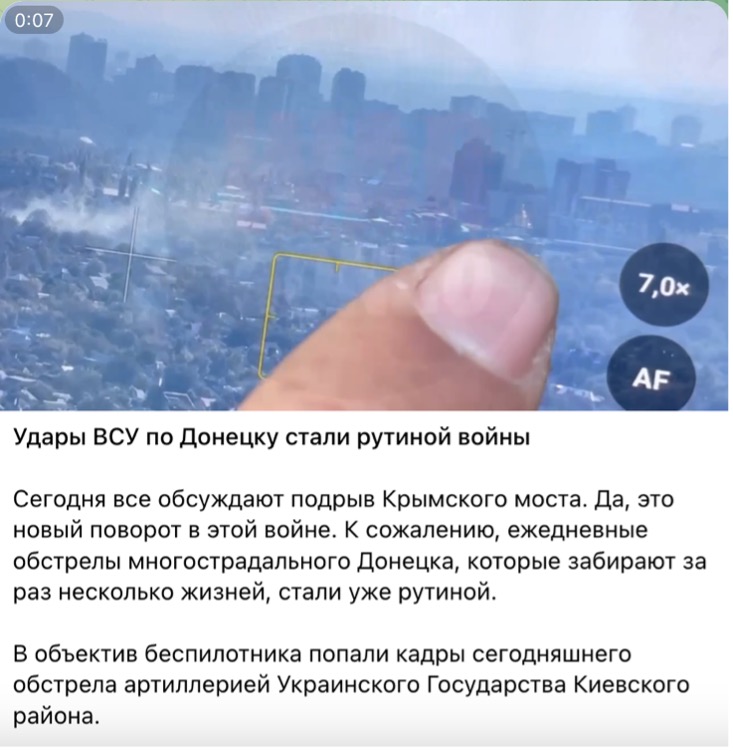

Figure 3. Post from Russian Telegram channel WarGonzo. Text translates as: “Today everyone is discussing the blowing up of the Crimean Bridge. Yes, this is a new twist in this war. Unfortunately, daily shelling of long-suffering Donetsk, which takes several lives at a time, has already become routine.”

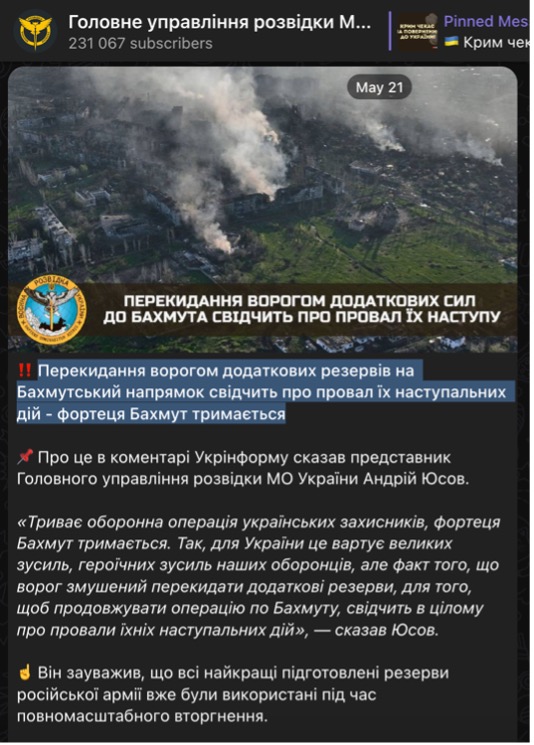

Figure 4. Post from Ukrainian Telegram channel “Головне управління розвідки МО України.” Text translates as: “The enemy’s transfer of additional reserves to the Bakhmut direction indicates the failure of their offensive actions – the Bakhmut fortress is holding.”

All three sources examined in this study engaged in the practice of deflection or misdirection. In this context, deflection concerns a source creating content that seeks to ignore negative results and emphasize some other event or talking point to downplay the significance of a perceived loss. In response to the Kerch Bridge explosion, Russian sources sought to deflect attention toward other issues. In a post from October 8 (Figure 3), the pro-Russian war correspondent channel known as WarGonzo posted a video showing a smoldering building with the caption “Today everyone is discussing the blowing up of the Crimean Bridge. Yes, this is a new twist in this war. Unfortunately, daily shelling of long-suffering Donetsk, which takes several lives at a time, has already become routine.” This post brushes over the attack on the bridge and emphasizes alleged war crimes occurring elsewhere in Russian-occupied Ukraine, therefore deflecting attention away from the explosion and minimizing its significance to the pro-Russian audience. Sources affiliated with the Wagner Group also engaged in deflection in the wake of the Kerch Bridge explosion. On the day of the explosion, Wagner-affiliated Telegram channel The Grey Zone posted a long paragraph describing organizational changes that were occurring in the upper echelons of the Russian military structure. The post briefly mentions the explosion but does not discuss the event in any detail.

Ukrainian sources also engaged in deflection. In a post from May 21, 2023, a Telegram channel belonging to the Main Directorate of Intelligence of the Ministry of Defense of Ukraine posted an image of burning buildings with the caption “The enemy’s transfer of additional reserves to the Bakhmut direction indicates the failure of their offensive actions – the Bakhmut fortress is holding” (Figure 4). This post came after the fall of Bakhmut was reported by the Associated Press and by Russia itself. However, despite this discrepancy, the Ukrainian source remained committed to emphasizing its allegedly strong hold on the city and the region. This post takes deflection to an extreme level.

Humor

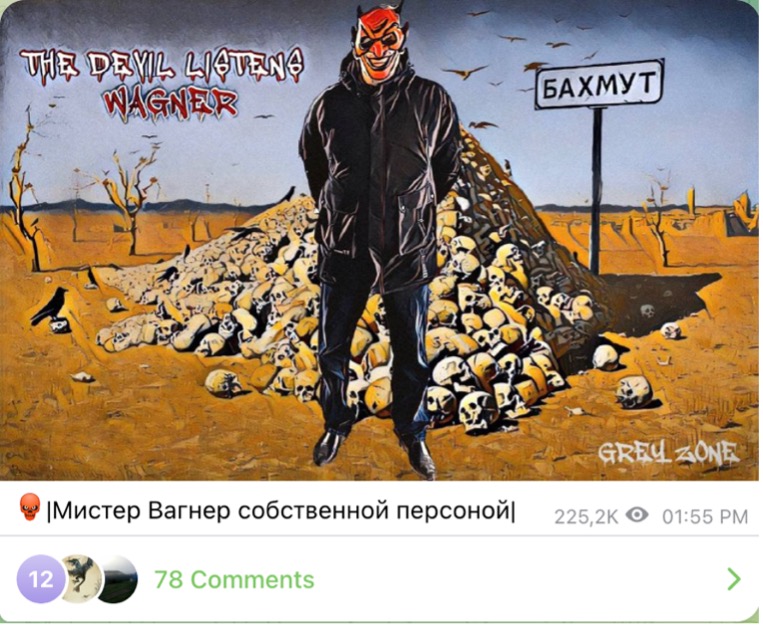

Figure 5. Post from Wagner Telegram channel “The Grey Zone.” Caption translates as “Mr. Wagner Himself.” Image depicts a satanic figure standing in front of a pile of skulls next to a sign reading “Bakhmut.”

Figure 6. Post from Ukrainian Telegram channel “Оперативний ЗСУ.” No caption. Video depicts the “Bongo Man” meme with the burning Kerch Bridge in the background.

Biały (2017) writes that “Visual content has two major functions – to impress or to dismay. It rarely has a purely informative character. It is also interesting to note the significant role played in psychological warfare by humoristic drawings and pictures” (p. 85). Memes and humorous images or videos have long been a massive part of internet culture, so it should not be a surprise that civilians have taken to propagating memes to cope with the toll of the war. However, Russian and Ukrainian forces have also taken to posting such content. Due to the popularity of memes, integrating humorous images with traditional forms of propaganda has been a relatively fluid process for social media propagandists. Interestingly, official Russian channels do not seem as interested in posting humorous content as their Ukrainian and Wagner counterparts, but this requires more investigation.

The first category of humorous content found online represents images that are akin to traditional political cartoons. One example of this can be seen in a Wagner post following the fall of Bakhmut, where the victorious “Mr. Wagner himself” is portrayed as a horned devil standing over a pile of Ukrainian skulls (Figure 5). These posts are less about humor and more about using cartoons and hyperbolic imagery to transmit a message, in this case with the devil representing the lethal capability of Wagner mercenaries. The second category of humorous content, as alluded to earlier, takes the form of internet memes. Often, memes relating to the war attempt to capitalize on the meme trends of the current day. One example of this can be seen in the Ukrainian response to the Kerch Bridge explosion, when the official ZSU Telegram page posted a rendition of the briefly popular “bongo man” meme with the smoldering bridge in the background of the video (Figure 6).

Violent Content

Figure 7. Post from Ukrainian Telegram channel “СОКИРА UA” with caption translating as “Wagnerites near Bakhmut.” Image shows rows of dead men.

Figure 8. Post from Wagner Telegram channel “The Grey Zone.” Image shows armed Wagner soldiers standing over the bodies of Ukrainian soldiers near Berkhovka.

One recent phenomenon is the act of posting violent content online. This trend was pioneered by fighters of the Islamic State, who gained notoriety for posting videos of beheadings and bombings on their media feeds, striking fear into the hearts of civilians and opposing soldiers the world over. Official channels tend to shy away from posting content directly showing death, but in a war with so many third-party content aggregators and semi-detached units, large amounts of violent content have emerged online and become some of the most popular videos of the war, likely due to their shock value and prevalence as propaganda tools. Every side engages in posting violent content, but this study found that Wagner and individual Ukrainian unit channels were much more likely to do so.

Around the one-year anniversary of the invasion, a Telegram channel branding itself as the “Ax of the UA (Ukrainian Army)” posted an image of dozens of partially dressed dead soldiers with the caption “Wagnerites near Bakhmut” (Figure 7). At the same time, Wagner channel The Grey Zone posted two images of dead Ukrainian soldiers near Berkhovka and a video of a skirmish near Vugledar, both located along the front lines in the occupied Donetsk Oblast where most of the fighting has occurred for the duration of the war (Figure 8). The motivation for posting this content appears to be very similar to the motivation for posting legitimizing content: namely, a desire to emphasize one force’s military might while portraying the opposing side as weak or ineffectual.

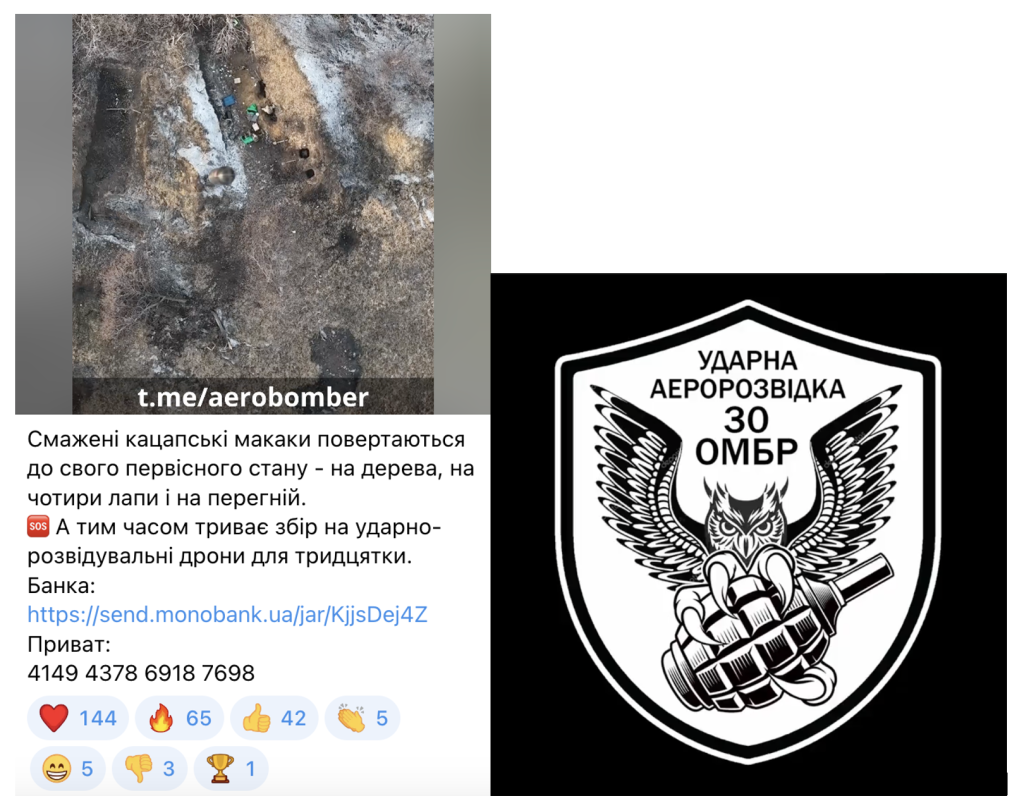

Figures 9 and 10. Post from Ukrainian Telegram channel “Aerobomber,” allegedly tied to the Strike Aerial Reconnaissance 30th OMBR unit. The image on the left shows a frame from a drone’s video camera as a grenade falls on a group of Russian soldiers. The image on the right is the unit’s self-designed insignia, depicting an owl clutching a grenade in its talons.

During the war, modified civilian drones have been used, primarily by Ukrainian forces, to deliver explosive payloads, usually in the form of a grenade or small bomb. Because many of these drones come with cameras, recordings of these deadly attacks are some of the most common and intimidating videos on the internet. The message from Ukrainian posters is clear: death can come from above at any time, and Russian soldiers probably won’t hear it until it is too late. The format of these videos is almost universal: a heavily watermarked video with hardbass-style music blaring in the background shows a drone’s point of view as it hovers above a group of soldiers before ultimately dropping the payload, leading to an explosion and the often slow, painful deaths of the enemy. Entire units of the Ukrainian Army, such as the Strike Aerial Reconnaissance 30th OMBR, are dedicated to drone warfare, and have become prolific in their posting habits (Figures 9 and 10). The logo for the 30th OMBR is an owl holding a grenade in its talons, with the pin already pulled.

Similarities and Differences Between Sides

The second research question that this study sought to answer concerned the similarities and differences among Russian, Ukrainian, and Wagner posting patterns. One commonality between official Russian and Ukrainian channels was their reliance on content aimed at legitimizing the war effort. These videos and images are perhaps the most palatable and “appropriate” form of social media propaganda. The content is largely aimed at soliciting support, and therefore maintains an authoritative tone. All three sides similarly engaged in deflective tactics to maintain a morally superior tone while ignoring or minimizing losses and negative facts. Ukrainian- and Wagner-affiliated sources were more likely to engage in posting violent content and drone bombing videos than their Russian counterparts, although individual pro-Russian content aggregators were willing to do so. Finally, meme content was spread throughout all three sources, but Ukrainian- and Wagner-affiliated sources were again more willing to participate in this trend. Ultimately, it appears that Ukrainian- and Wagner-affiliated channels were more likely to post edgier and more violent content, while Russian sources attempted to maintain a balanced and authoritative tone.

Potential Benefits

Regarding the third research question, the perceived benefits of these different types of content vary. The case for legitimization appears to be straightforward. By emphasizing one’s strengths and the enemy’s weaknesses, propagandists seek to win hearts and minds for the cause. The invasion of Ukraine has resulted in mass conscription in both Ukraine and Russia, and as a result the popularity of the war has been in flux for both sides. According to a Gallup poll from October 2023, the popularity of the war has decreased among Ukrainians from 70% to 60% in the past year (Vigers, 2023). The popularity of the war among Russian civilians fell by a very similar 9% in almost the same period (Pribylov, 2023). In the examples reviewed by this study, legitimization ranged from images displaying military might to long letters conveying patriotic passion. One video allegedly depicting a Ukrainian civilian tearing down a Ukrainian flag displays the heart of the issue: Russian and Ukrainian propagandists believe that legitimization helps bolster civilian morale and give credence to their actions.

Deflection serves as the opposite of legitimization in its purpose. By deflecting attention away from losses to other issues, militaries seek to maintain their image of superiority and strength through silence. This can come in the form of either briefly acknowledging or flatly ignoring a negative fact, which is consistent with propagandist techniques from the past. Ukraine and Russia are far from the only nations to engage in this activity during war or peace, but it has become an enshrined tactic in the current war.

Humorous videos and images, along with internet memes, may serve to raise the spirits of the civilians and soldiers consuming content. Morale is a highly important resource in warfare, and the depletion of morale is tantamount to defeat itself in many scenarios. Therefore, the usage of memes as online propaganda can be interpreted as an attempt to win a war on the mental front. Furthermore, memes spread quickly across the internet due to their replicability and popularity, so integrating propaganda into memes is a good way of ensuring that a message spreads to a desired audience. This tactic has been used heavily by political campaigns in America to increase awareness, and it is likely that memes play a similar role in the Russo-Ukrainian War.

Violent content and drone bombing videos are a relatively new phenomenon in hybrid warfare. The initial impulse is to label these videos as an attempt to shock and demoralize the enemy through violent and jarring videos of their countrymen’s deaths. However, the presence of quick techno music and a flashy editing style implies that these videos may serve the primary purpose of energizing and exciting Ukrainian civilians and soldiers. More research is necessary to determine the exact motivation for this style of content, but its prevalence is certainly significant and noteworthy.

V. Discussion

Perhaps the most interesting aspect of the prevalence of social media propaganda in the Russo-Ukrainian War is how it reaches and interacts with Western audiences. Online communities, such as Reddit’s r/NonCredibleDefense or r/CombatFootage, are dedicated to posting and compiling large quantities of footage and memes from all sides of the war, often with ambiguous intent. Accounts such as Instagram’s atlas.news3 or ourwarstoday2 appear to be genuinely journalistic in their efforts, and post content on a wide range of topics including the Russo-Ukrainian War. However, individual accounts on Reddit posting to r/NonCredibleDefense may be posting content simply because it is shocking and will generate large amounts of discussion and “upvotes.” The most viewed and upvoted content on combat-focused subreddits is consistently content that shows close-quarters combat, impressive aerial duels, or simply the biggest explosions. Western, English-speaking audiences on these platforms appear to be overwhelmingly pro-Ukraine, but not universally so. Many commenters appear to be there simply for the spectacle, but in an age where irony and apathy are dominant, it is difficult to obtain a genuine perspective on these consumers based on their online comments and interactions.

The ultimate question of the hybrid warfare effort from both sides is whether their efforts are successful in turning the hearts and minds of domestic and foreign civilians and soldiers. This study has documented the rhetorical goals and devices employed by Russian, Ukrainian, and Wagner forces on social media, but the actual impact of these posts is unclear. Early in the war, reports surfaced of Russian soldiers surrendering en masse to Ukrainian soldiers and civilians alike, allegedly influenced in part by seeing their countrymen die on social media. However, today these reports are absent from major media cycles in the West. With no end to the war in sight, the continued efforts of social media propagandists increasingly resemble the trench warfare in which the conventional soldiers of Russia and Ukraine are mired. Finally, this research was limited by several factors that warrant evaluation in future research. The form factor of this research inherently limited its sample size, the scope of the research questions was ambitious, and the findings of the research were heavily reliant upon the services of Google Translate as opposed to a more robust translating approach.

VI. Conclusion

The ongoing phase of the Russo-Ukrainian War has led to an escalation in the amount and intensity of social media posting from channels associated with Russian, Ukrainian, and Wagner forces. This study examined the rhetorical goals and devices employed by these channels and found that the four primary goals of social media content were to legitimize, deflect, amuse, or convey violence. This research further found that posting patterns were relatively consistent across all sides of the social media battle, but that Russian sources often declined to post as much violent content as their Ukrainian or Wagner-associated counterparts. Finally, this research offered qualitative interpretations of the perceived benefits of each type of rhetorical goal, all ultimately attempting to either bolster or dishearten the morale of civilians and soldiers.

This research has only skimmed the surface concerning rhetorical goals and devices in hybrid warfare. The sheer amount of content not examined by this study demonstrates the need for more research focused on better categorizing social media posts. Additionally, research must be conducted to ascertain the internal motivations for such posting. However, without internal documents or whistleblowers, this data will be difficult to obtain. Similarly, more research must be done to determine the ways in which social media propagandists selectively target either pro-Russian or pro-Ukrainian consumers.

Acknowledgements

I want to thank Dr. Daniel Haygood, whose mentorship and guidance were instrumental to the completion of this project. I also want to thank Dr. Michael Carignan for nurturing my interest in research and higher learning. Finally, I thank my friends and family for their unwavering support throughout the process of writing and presenting this research.

References

3-ŃŃ Š¾ŠŗŃŠµŠ¼Š° ŃŃŃŃŠ¼Š¾Š²Š° Š±ŃŠøŠ³Š°Š“Š° [@ab3army]. (2023, May 25). Š Š¾Š±Š¾ŃŠ° Ń ŃŃŠ°Š±Ń Š½Š° ŠæŠµŃŠµŠ“Š¾Š²ŃŠ¹ ŠæŃŠ“ ŠŠ°Ń Š¼ŃŃŠ¾Š¼. ŠŠ»Š°Š½ŃŠ²Š°Š½Š½Ń Š½Š°ŃŃŃŠæŠ°Š»ŃŠ½ŠøŃ Š“ŃŠ¹ 1-Š³Š¾ ŃŃŃŃŠ¼Š¾Š²Š¾Š³Š¾ Š±Š°ŃŠ°Š»ŃŠ¹Š¾Š½Ń. [Images]. Telegram.

Biały, B. (2017). Social media: From social exchange to battlefield. The Cyber Defense Review, 2(2), 69–90.

Brown, J. (2018). An alternative war: The development, impact, and legality of hybrid warfare conducted by the nation state. Journal of Global Faultlines, 5(1–2), 58–82.

Š”ŠŠŠŠ Š UA. (2023, February 22). ŠŠ°Š³Š½ŠµŃŠ¾Š²ŃŃ ŠæŠ¾Š“ ŠŠ°Ń Š¼ŃŃŠ¾Š¼. [Image]. Telegram.

Danyk, Y., & Briggs, C. M. (2023). Modern cognitive operations and hybrid warfare. Journal of Strategic Security, 16(1), 35–50.

Feezell, J. T. (2018). Agenda setting through social media: The importance of incidental news exposure and social filtering in the digital era. Political Research Quarterly, 71(2), 482–494.

ŠŠ¾Š»Š¾Š²Š½Šµ ŃŠæŃŠ°Š²Š»ŃŠ½Š½Ń ŃŠ¾Š·Š²ŃŠ“ŠŗŠø ŠŠ Š£ŠŗŃŠ°ŃŠ½Šø [@DIUkraine]. (2023, 21 May). ŠŠµŃŠµŠŗŠøŠ“Š°Š½Š½Ń Š²Š¾ŃŠ¾Š³Š¾Š¼ Š“Š¾Š“Š°ŃŠŗŠ¾Š²ŠøŃ ŃŠµŠ·ŠµŃŠ²ŃŠ² Š½Š° ŠŠ°Ń Š¼ŃŃŃŃŠŗŠøŠ¹ Š½Š°ŠæŃŃŠ¼Š¾Šŗ ŃŠ²ŃŠ“ŃŠøŃŃ ŠæŃŠ¾ ŠæŃŠ¾Š²Š°Š» ŃŃ Š½Š°ŃŃŃŠæŠ°Š»ŃŠ½ŠøŃ Š“ŃŠ¹ – ŃŠ¾ŃŃŠµŃŃ ŠŠ°Ń Š¼ŃŃ ŃŃŠøŠ¼Š°ŃŃŃŃŃ ŠŃŠ¾ ŃŠµ Š² ŠŗŠ¾Š¼ŠµŠ½ŃŠ°ŃŃ. [Image]. Telegram.

Grey Zone [@grey_zone]. (2022, October 8). Š Ā«ŠŃŃŠæŠæŠµ ŠŠ°Š³Š½ŠµŃŠ°Ā» ŃŠ½Š¾Š²Š° ŃŠ¼Š¾ŃŃ Š½Š¾Š²Š¾ŃŠ²Š»ŠµŠ½Š½ŃŃ Š¼ŃŠ·ŃŠŗŠ°Š½ŃŠ¾Š² Š“Š»Ń Š³Š°ŃŃŃŠ¾Š»ŠµŠ¹ Š·Š° ŃŃŠ±ŠµŠ¶Š¾Š¼! ā¢ Š“Š¾ŃŃŠ¾Š¹Š½Š¾Šµ ŃŠøŠ½Š°Š½ŃŠ¾Š²Š¾Šµ Š²Š¾Š·Š½Š°Š³ŃŠ°Š¶Š“ŠµŠ½ŠøŠµ (ŃŠŗŠ¾Š»ŃŠŗŠ¾ Šø Š¾Š±ŠµŃŠ°Š½Š¾, Š² Š¾ŃŠ»ŠøŃŠøŠø Š¾Ń. [Image]. Telegram.

Grey Zone [@grey_zone]. (2022, October 8). Š ŃŠ²ŃŠ·Šø Ń Š¾ŃŃŃŠµŃŃŠ²Š»ŃŠ½Š½Š¾Š¹ Š°ŃŠ°ŠŗŠ¾Š¹ Š£ŠŗŃŠ°ŠøŠ½Ń Š½Š° Ā«ŠŃŃŠ¼ŃŠŗŠøŠ¹ Š¼Š¾ŃŃĀ», ŃŠ¼ŠµŃŃŠøŠ»ŠøŃŃ ŠæŠ»Š°Š½ŠøŃŃŠµŠ¼ŃŠµ Šø ŃŠ¶Šµ Š½ŠµŃŠŗŠ¾Š»ŃŠŗŠ¾ Š½ŠµŠ“ŠµŠ»Ń ŠæŃŠ¾Š²Š¾Š“ŠøŠ¼ŃŠµ ŠæŠµŃŠµŃŃŠ°Š½Š¾Š²ŠŗŠø Š² ŠŠøŠ½ŠøŃŃŠµŃŃŃŠ²Šµ Š¾Š±Š¾ŃŠ¾Š½Ń. [Text]. Telegram.

Grey Zone [@grey_zone]. (2023, February 24). ŠŠ¾Š“ŃŠ²ŠµŃŠ¶Š“Š°ŃŃŠøŠµ ŠŗŠ°Š“ŃŃ ŠøŠ· ŠŠµŃŃ Š¾Š²ŠŗŠø, ŠŗŠ¾ŃŠ¾ŃŠ°Ń ŃŠµŠ³Š¾Š“Š½Ń ŃŃŃŠ¾Š¼ ŠæŠµŃŠµŃŠ»Š° ŠæŠ¾Š“ ŠŗŠ¾Š½ŃŃŠ¾Š»Ń Š§ŠŠ Ā«ŠŠ°Š³Š½ŠµŃĀ» Š ŃŠµŠ·ŃŠ»ŃŃŠ°ŃŠµ ŃŃŠ¶ŠµŠ»ŃŃ Š±Š¾ŠµŠ² ŠæŃŠ¾ŃŠøŠ²Š½ŠøŠŗ Š½Šµ Š²ŃŠ“ŠµŃŠ¶Š°Š» Š½Š°ŃŠøŃŠŗŠ°. [Image]. Telegram.

Grey Zone [@grey_zone]. (2023, February 24). ŠŠ°Š“ŃŃ ŠøŠ· ŠæŠ¾Š“ Š£Š³Š»ŠµŠ“Š°ŃŠ°, Š³Š“Šµ Š±Š¾Š¹ŃŃ Š±ŃŠøŠ³Š°Š“Ń Š¼Š¾ŃŃŠŗŠ¾Š¹ ŠæŠµŃ Š¾ŃŃ Š¢ŠøŃ Š¾Š¾ŠŗŠµŠ°Š½ŃŠŗŠ¾Š³Š¾ ŃŠ»Š¾ŃŠ° Š Š¾ŃŃŠøŠø ŃŃŃŃŠ¼Š¾Š²Š°Š»Šø ŃŠŗŃŠµŠæŃŠ°Š¹Š¾Š½ ŠæŃŠ¾ŃŠøŠ²Š½ŠøŠŗŠ°. Š Š¾Š“Š½Š¾Š¼ ŠøŠ· ŃŠæŠøŠ·Š¾Š“Š¾Š² Š±Š¾Ń. [Video]. Telegram.

Grey Zone [@grey_zone]. (2023, May 20). |ŠŠøŃŃŠµŃ ŠŠ°Š³Š½ŠµŃ ŃŠ¾Š±ŃŃŠ²ŠµŠ½Š½Š¾Š¹ ŠæŠµŃŃŠ¾Š½Š¾Š¹|. [Image]. Telegram.

Grey Zone [@grey_zone]. (2023, May 20). ŠŠ Š£ŠŠŠ ŠŠŠŠŠŠ Š | ŠŠ ŠŠŠ¬. Š§ŠŠ”Š¢Š¬. Š ŠŠŠŠŠ. ŠŠ¢ŠŠŠŠ. ŠŠ¾Š“ŃŃŠ¶ŠµŠ½ŠøŠµ ŃŠ»Š°Š³Š° Š Š¾ŃŃŠøŠø Šø Š±Š¾ŠµŠ²Š¾Š³Š¾ Š·Š½Š°Š¼Ń Ā«ŠŃŃŠæŠæŃ ŠŠ°Š³Š½ŠµŃŠ°Ā» Š² ŠŠ°Ń Š¼ŃŃŠµ ŠæŠ¾ŃŠ»Šµ ŠµŠ³Š¾ Š¾ŃŠ²Š¾Š±Š¾Š¶Š“ŠµŠ½ŠøŃ. ŠŃŠ°. [Video]. Telegram.

Hanlon, B. (2018). It’s not just Facebook: Countering Russia’s social media offensive. German Marshall Fund of the United States. Retrieved from

Kullab, S., & Litvinova, D. (2023, May 23). Russia TV celebrates as it reports the capture of Bakhmut, comparing it to Berlin in 1945. AP News.

Lin, H. (2022). Russian cyber operations in the invasion of Ukraine. The Cyber Defense Review, 7(4), 31–46.

Mullaney, S. (2022). Everything flows: Russian information warfare forms and tactics in Ukraine and the US between 2014 and 2020. The Cyber Defense Review, 7(4), 193–212.

ŠŠŠ”ŠÆŠŠŠ [@mysiagin]. (2022, October 8). ŠŃŠ¾ŠæŠ°Š³Š°Š½Š“ŠøŃŃŠø ŠæŠ¾ŠŗŠ°Š·Š°Š»Šø ŃŠµ ŠæŃŠµŠŗŃŠ°ŃŠ½Šµ Š²ŃŠ“ŠµŠ¾ ŃŠ¾Š³Š¾, ŃŠŗ Š²ŠøŠ³Š»ŃŠ“Š°Ń ŠŃŠøŠ¼ŃŃŠŗŠøŠ¹ Š¼ŃŃŃ. ŠŠ° Š¶Š°Š»Ń, Š¾Š“Š½Š° ŃŠ¼ŃŠ³Š° Š²ŃŃŠ»ŃŠ»Š° ā Š°Š»Šµ ŠæŃŠ¾ŠæŃŃŠŗŠ°ŃŃŃ Š»ŠøŃŠµ Š»ŠµŠ³ŠŗŠ¾Š²Ń Š°Š²ŃŠ¾Š¼Š¾Š±ŃŠ»Ń. [Videos]. Telegram.

ŠŠæŠµŃŠ°ŃŠøŠ²Š½ŠøŠ¹ ŠŠ”Š£ [@operativno]. (2022, October 8). [Video]. Telegram.

ŠŠæŠµŃŠ°ŃŠøŠ²Š½ŠøŠ¹ ŠŠ”Š£ [@operativnoZSU]. (2023, February 24). ŠŠ¾Š»Š¾Š“ŠøŠ¼ŠøŃ ŠŠµŠ»ŠµŠ½ŃŃŠŗŠøŠ¹: ŠŠ·ŃŠ² ŃŃŠ°ŃŃŃ Ń Š·ŃŃŃŃŃŃŃ Š»ŃŠ“ŠµŃŃŠ² Ā«ŠŃŃŠæŠø ŃŠµŠ¼ŠøĀ». ŠŠ°ŃŠ° Š·ŃŃŃŃŃŃ Š¼Š°Š»Š° Š“Š²Ń ŃŠ°ŃŃŠøŠ½Šø. Š£ ŠæŠµŃŃŃŠ¹, ŠæŃŠ±Š»ŃŃŠ½ŃŠ¹ ŃŠ°ŃŃŠøŠ½Ń ŠæŠ¾Š“ŃŠŗŃŠ²Š°Š² ŠæŠ°ŃŃŠ½ŠµŃŠ°Š¼. [Video]. Telegram.

Pribylov, S. (2023, September 6). Russia’s shifting public opinion on the war in Ukraine. Voice of America. Retrieved from

Prier, J. (2017). Commanding the trend: Social media as information warfare. Strategic Studies Quarterly, 11(4), 50–85.

Ratsiborynska, V. (2016). When hybrid warfare supports ideology: Russia Today. NATO Defense College. Retrieved from

Razved Dozor [@Razved_Dozor]. (2022, October 8). Š Š³. ŠŠ°ŃŠ³Š°Š½ŠµŃ (ŠŠ½ŠµŠæŃŠ¾ŠæŠµŃŃŠ¾Š²ŃŠŗŠ°Ń Š¾Š±Š».) Š³ŃŠ°Š¶Š“Š°Š½ŠøŠ½ Š£ŠŗŃŠ°ŠøŠ½Ń ŃŠµŃŠøŠ», ŃŃŠ¾ Š¶ŠµŠ»ŃŠ¾-ŃŠøŠ½ŠøŠ¹ ŃŠ»Š°Š³ Š½ŠµŠ±Š»Š°Š³Š¾ŠæŃŠøŃŃŠ½Š¾ Š²Š»ŠøŃŠµŃ Š½Š° Š¶ŠøŃŠµŠ»ŠµŠ¹ Š³Š¾ŃŠ¾Š“Š°. [Video]. Telegram.

Razved Dozor [@Razved_Dozor]. (2023, February 24). ŠŃŃŠ·ŃŃ ŠŠ¾Š¶Š°Š»ŃŠ¹, Š¼Š½Š¾Š³ŠøŠµ, ŠŗŠ°Šŗ Šø Ń, ŃŠ¼Š¾ŃŃŠµŠ»Šø Š¾Š±ŃŠ°ŃŠµŠ½ŠøŠµ ŠæŃŠµŠ·ŠøŠ“ŠµŠ½ŃŠ°. ŠŠ°Š“ŃŠ¼Š°Š»Š°ŃŃ. ŠŃŠµ, ŃŃŠ¾ ŠæŃŠ¾ŠøŠ·Š¾ŃŠ»Š¾ Š·Š° Š³Š¾Š“ Š“Š¾Š»Š¶Š½Š¾ Š±ŃŠ»Š¾ ŠæŃŠ¾ŠøŠ·Š¾Š¹ŃŠø. Š Š¾ŃŃŠøŃ Š“Š¾Š»Š¶Š½Š° [Image]. Telegram.

Razved Dozor [@Razved_Dozor]. (2023, May 20). ŠŠ°Ń Š¼ŃŃ (ŠŃŃŃŠ¼Š¾Š²ŃŠŗ) Š²Š·ŃŃ! 20.05.2023 ŃŠµŠ³Š¾Š“Š½Ń ŠæŠ¾ ŠæŠ¾Š»ŃŠ“Š½Ń Š±ŃŠ» ŠæŠ¾Š»Š½Š¾ŃŃŃŃ Š²Š·ŃŃ ŠŠ°Ń Š¼ŃŃ ŠŠ°ŃŠ²Š»ŠµŠ½ŠøŠµ ŠŃŠøŠ³Š¾Š¶ŠøŠ½Š° Š¾ Š²Š·ŃŃŠøŠø Š½Š°ŃŠµŠ»ŠµŠ½Š½Š¾Š³Š¾ ŠæŃŠ½ŠŗŃŠ° ŠŠ°Ń Š¼ŃŃ ŃŠøŠ»Š°Š¼Šø Š§ŠŠ Ā«ŠŠ°Š³Š½ŠµŃĀ». [Video]. Telegram.

Rosenberry, J., & Vicker, L. A. (2021). Applied mass communication theory (3rd ed.). Taylor & Francis.

Ross, R. J., & Rutland, J. (2022). A military of influencers: The U.S. Army social media, and winning narrative conflicts. The Cyber Defense Review, 7(4), 213–226.

Š£Š“Š°ŃŠ½Š° Š°ŠµŃŠ¾ŃŠ¾Š·Š²ŃŠ“ŠŗŠ° 30-ŠŠŠŠ [@aerobomber]. (2023, February 24). Š”Š¼Š°Š¶ŠµŠ½Ń ŠŗŠ°ŃŠ°ŠæŃŃŠŗŃ Š¼Š°ŠŗŠ°ŠŗŠø ŠæŠ¾Š²ŠµŃŃŠ°ŃŃŃŃŃ Š“Š¾ ŃŠ²Š¾Š³Š¾ ŠæŠµŃŠ²ŃŃŠ½Š¾Š³Š¾ ŃŃŠ°Š½Ń – Š½Š° Š“ŠµŃŠµŠ²Š°, Š½Š° ŃŠ¾ŃŠøŃŠø Š»Š°ŠæŠø Ń Š½Š° ŠæŠµŃŠµŠ³Š½ŃŠ¹. Š ŃŠøŠ¼ ŃŠ°ŃŠ¾Š¼ ŃŃŠøŠ²Š°Ń. [Video]. Telegram.

Vigers, B. (2023, October 25). Ukrainians stand behind war effort despite some fatigue. Gallup.com. Retrieved from

WarGonzo [@WarGonzo]. (2022, October 12). Š£Š“Š°ŃŃ ŠŠ”Š£ ŠæŠ¾ ŠŠ¾Š½ŠµŃŠŗŃ ŃŃŠ°Š»Šø ŃŃŃŠøŠ½Š¾Š¹ Š²Š¾Š¹Š½Ń Š”ŠµŠ³Š¾Š“Š½Ń Š²ŃŠµ Š¾Š±ŃŃŠ¶Š“Š°ŃŃ ŠæŠ¾Š“ŃŃŠ² ŠŃŃŠ¼ŃŠŗŠ¾Š³Š¾ Š¼Š¾ŃŃŠ°. ŠŠ°, ŃŃŠ¾ Š½Š¾Š²ŃŠ¹ ŠæŠ¾Š²Š¾ŃŠ¾Ń Š² ŃŃŠ¾Š¹ Š²Š¾Š¹Š½Šµ [Video]. Telegram.

WarGonzo [@WarGonzo]. (2023, February 24). ŠŃŠ¾ŠµŠŗŃŃ Ā«Š¢ŃŠøŠ±ŃŠ½Š°Š»Ā» ā Š³Š¾Š“! ŠŃ Š½Š°Ń Š¾Š“ŠøŠ¼ Šø ŠæŃŠ±Š»ŠøŠŗŃŠµŠ¼ ŠøŠ½ŃŠ¾ŃŠ¼Š°ŃŠøŃ Š¾ ŠæŃŠµŃŃŃŠæŠ»ŠµŠ½ŠøŃŃ Šø ŠæŃŃŠŗŠ°Ń Š½Š°ŃŠøŃŃŠ¾Š² ŠøŠ· Ā«ŠŠ·Š¾Š²Š°Ā» Šø ŠŠ”Š£ Š² Š¾ŃŠ½Š¾ŃŠµŠ½ŠøŠø Š¼ŠøŃŠ½ŃŃ [Video]. Telegram.

WarGonzo [@WarGonzo]. (2023, May 20). Š¤Š»Š°Š³ Š½Š°Š“ ŠŃŃŃŠ¼Š¾Š²ŃŠŗŠ¾Š¼/ŠŠ°Ń Š¼ŃŃŠ¾Š¼. [Video]. Telegram.